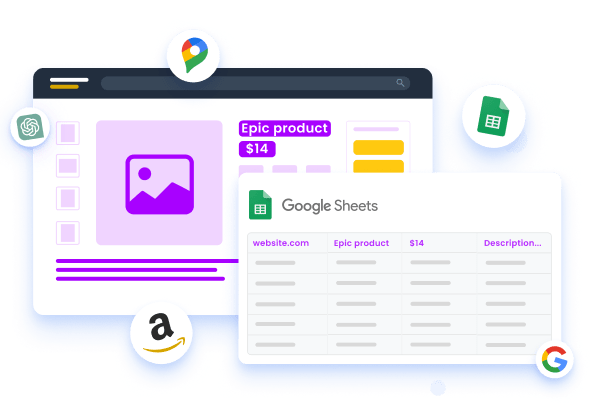

Data Crawling vs. Data Scraping: What's the Difference?

Web crawlers are automated programs that browse the internet and methodically collect data from websites. The process usually involves following hyperlinks from one page to another and indexing the content of each page for later use. Crawling gathers information from many websites or pages, while scraping focuses on specific elements of a single website.

For example, you can write a simple Python script that automatically visits a large number of sites and collects data using the requests library. The complexity of the code used for web scraping and web crawling also differs. Web scraping typically needs more complex code, because it involves working with a site's HTML and extracting specific elements. This usually means using libraries such as BeautifulSoup or Scrapy in Python, or tools like Octoparse.

So first you create a crawler that outputs all the page URLs you care about; these can be pages in a specific category on the site or in specific parts of the site.
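The distinction between crawling (following links to discover pages) and scraping (pulling specific elements out of a known page) can be sketched in a few lines of Python. This is a minimal illustration, not a production crawler: the `PAGES` dictionary, the `example.com` URLs, and the `fetch` helper are hypothetical stand-ins for real HTTP responses, and the standard-library `html.parser` is used so that the sketch runs without installing anything. In a real project, `fetch` would typically wrap a call to the requests library, and the parsing would usually be done with BeautifulSoup.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "site" standing in for real HTTP responses.
PAGES = {
    "https://example.com/": '<title>Home</title><a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<title>Page A</title><a href="/b">B</a>',
    "https://example.com/b": '<title>Page B</title><a href="/">Home</a>',
}


class LinkAndTitleParser(HTMLParser):
    """Collects href values from <a> tags and the text inside <title>."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.title = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)
        elif tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def fetch(url):
    """Stand-in for an HTTP GET; returns None for unknown URLs."""
    return PAGES.get(url)


def crawl(start_url):
    """Crawling: follow links breadth-first and return every page visited."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)
        parser = LinkAndTitleParser()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


def scrape_title(url):
    """Scraping: extract one specific element (the title) from a known page."""
    html = fetch(url)
    if html is None:
        return None
    parser = LinkAndTitleParser()
    parser.feed(html)
    return parser.title.strip()
```

Calling `crawl("https://example.com/")` produces the list of URLs to work on, and `scrape_title` can then be applied to each of them, which mirrors the "crawl first, scrape second" workflow described above.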

- Data scraping may involve spreadsheets, storage devices, and so on: anywhere data is present in any form.
- The key difference between web scraping and data scraping is that web scraping happens exclusively online.
- PDF files offer password protection, which is a must when handling sensitive client information and important business documents.
- With scraping you usually know the target websites; you may not know the specific page URLs, but you know the domains at least.

How Web Scrapers Function

However, web scraping can be done manually, without the help of a crawler. A web crawler, on the other hand, is usually accompanied by scraping to filter out unnecessary information. One of the most challenging aspects of web crawling is coordinating successive crawls. Our crawlers have to be polite to the servers they visit, so that they do not overload or antagonize them with requests. Over time, our crawlers also have to get smarter (and not crazy!).
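One common way to make a crawler "polite" is to honor the site's robots.txt rules and to pause between requests. The sketch below shows that idea using Python's standard `urllib.robotparser`. The `USER_AGENT` name, the `ROBOTS_TXT` content, and the helper functions are assumptions made for illustration; a real crawler would download robots.txt from the target server and pass in its own fetch function.

```python
import time
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-crawler"  # hypothetical crawler name

# Hypothetical robots.txt content; normally fetched from the target site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


def polite_plan(urls, rules, default_delay=1.0):
    """Return the URLs the crawler may fetch and the delay to use between them."""
    allowed = [u for u in urls if rules.can_fetch(USER_AGENT, u)]
    delay = rules.crawl_delay(USER_AGENT)  # None if robots.txt sets no delay
    return allowed, float(delay) if delay is not None else default_delay


def polite_fetch_all(urls, rules, fetch, sleep=time.sleep):
    """Fetch only allowed URLs, sleeping between consecutive requests."""
    allowed, delay = polite_plan(urls, rules)
    results = []
    for i, url in enumerate(allowed):
        if i > 0:
            sleep(delay)  # wait before every request after the first
        results.append(fetch(url))
    return results
```

Passing `sleep` as a parameter is a design choice that keeps the pacing logic easy to test; in normal use the default `time.sleep` applies.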


Web Crawling Tools

Scrapers do not have to worry about being polite or following any ethical rules. Crawlers, however, must make sure they are polite to the servers. They need to operate in a way that does not antagonize the servers, yet be agile enough to extract all the information required. Often this information is duplicated, and multiple pages end up containing the same data. The crawlers have no built-in means of recognizing this duplicate information, but removing it is necessary. Data de-duplication therefore becomes part of web crawling.
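A simple way to approach de-duplication is to compute a fingerprint of each page's content and keep only the first page seen for each fingerprint. The sketch below assumes pages arrive as `(url, text)` pairs and treats two pages as duplicates when their text matches after lowercasing and collapsing whitespace; the function names are hypothetical, and this normalization only catches exact copies, not near-duplicates.

```python
import hashlib
import re


def fingerprint(text):
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def deduplicate(pages):
    """Keep the first (url, text) pair for each distinct content fingerprint."""
    seen = set()
    unique = []
    for url, text in pages:
        key = fingerprint(text)
        if key in seen:
            continue  # same content already collected from another URL
        seen.add(key)
        unique.append((url, text))
    return unique
```

Storing only the hash rather than the full text keeps the `seen` set small, which matters once a crawl covers many pages.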
